Hi,

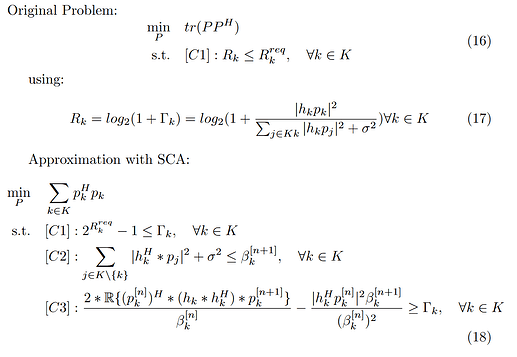

I have adopted a solution from this paper to solve a non-convex SDMA problem using successive convex approximation (SCA).

The original paper states that its SCA approximation always converges to a local optimum, because only lower-bound approximations are used:

“Convergence analysis: As (9), (11) and (12) are the lower bound approximations of (7d), (10a) and (8d), the optimal solution obtained in the [n − 1]-th iteration also serves as a feasible solution for the [n]-th iteration.”

I am fairly sure that my implementation has the same property, as it follows exactly the same steps to construct the SCA problem.
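For reference, this is how I read the surrogate that ends up in `SINR_ray` in my code. The notation is mine, and I am assuming it matches the paper's approximation of the signal term. For $\beta_k > 0$ and $\beta_k^{[n]} > 0$:

$$
\frac{|h_k^H p_k|^2}{\beta_k}
\;\ge\;
\frac{2\,\mathrm{Re}\{(p_k^{[n]})^H h_k h_k^H p_k\}}{\beta_k^{[n]}}
- \frac{|h_k^H p_k^{[n]}|^2}{(\beta_k^{[n]})^2}\,\beta_k
$$

The bound holds because the left side minus the right side is a squared magnitude:

$$
\left|\frac{h_k^H p_k}{\sqrt{\beta_k}} - \frac{\sqrt{\beta_k}\; h_k^H p_k^{[n]}}{\beta_k^{[n]}}\right|^2 \ge 0,
$$

with equality at $(p_k, \beta_k) = (p_k^{[n]}, \beta_k^{[n]})$. That tightness at the expansion point is what makes the previous solution feasible for the next subproblem.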

The following is my MATLAB implementation.

    for cur_run = 1:max_runs
        cvx_begin quiet
            variable P_n1(NT,N_user) complex
            variable beta_n1(N_user,1)
            variable Gamma_n1(N_user,1)
            expression interf_ray(N_user,1)
            expression SINR_ray(N_user,1)

            % Build the objective: total transmit power
            trace_sum = 0;
            vector_ray = [];
            for k = 1:N_user
                p_k_n1 = P_n1(:,k);
                trace_sum = trace_sum + (p_k_n1'*p_k_n1);
            end

            % Construct constraints
            for k = 1:N_user
                p_k_n1 = P_n1(:,k);
                p_k_n = P_n(:,k);
                h_k = H(:,k);
                beta_k_n1 = beta_n1(k);
                beta_k_n = beta_n(k);

                % Sums up interfering users
                j_sum = 0;

                % Subloop building interference sum
                for j = 1:N_user
                    % Interfering user j
                    p_j_n1 = P_n1(:,j);
                    if (k == j)
                        continue
                    else
                        % Note: We need to use square_pos here, which squares
                        % the positive part, x_+ = max{0,x}.
                        % Regular square does not work.
                        j_sum = j_sum + square_pos(abs(h_k'*p_j_n1));
                    end
                end

                % Array representing the interference-plus-noise term
                interf_ray(k,1) = j_sum + sigma_n^2;

                % Array representing the linearised signal-of-interest term
                SINR_ray(k,1) = 2*real(p_k_n'*(h_k*h_k')*p_k_n1)/beta_k_n ...
                    - (square_pos(abs(h_k'*p_k_n))*beta_k_n1)/(beta_k_n^2);
            end

            % Define optimization problem to solve
            minimize(trace_sum)
            subject to
                interf_ray <= beta_n1;
                SINR_ray >= Gamma_n1;
                2.^(R_req.')-1 <= Gamma_n1;
        cvx_end

        % Prepare for next iteration
        beta_n = beta_n1;
        P_n = P_n1;
        cur_sol = cvx_optval;

        % Stop once the objective changes by no more than e
        if (abs(cur_sol - last_sol) <= e)
            break;
        else
            last_sol = cur_sol;
        end
    end
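To check that the surrogate used for `SINR_ray` really is a lower bound, independently of CVX, a quick numerical test can be run in plain MATLAB. This is only a sketch: `NT = 4`, the random draws and the number of trials are illustrative assumptions, not the values from my simulation.

```matlab
% Sketch: the linearised signal term should never exceed the true
% quadratic-over-linear term |h'*p|^2 / beta when both betas are positive.
NT = 4;                 % number of transmit antennas (illustrative value)
n_trials = 1000;        % number of random test points (illustrative value)
max_violation = -Inf;   % largest observed (surrogate - true value)

for t = 1:n_trials
    h       = (randn(NT,1) + 1i*randn(NT,1)) / sqrt(2);  % channel vector
    p_n     = randn(NT,1) + 1i*randn(NT,1);              % previous iterate
    p_n1    = randn(NT,1) + 1i*randn(NT,1);              % candidate new point
    beta_n  = 0.1 + rand();                              % previous beta (> 0)
    beta_n1 = 0.1 + rand();                              % candidate beta (> 0)

    true_val  = abs(h'*p_n1)^2 / beta_n1;
    surrogate = 2*real(p_n'*(h*h')*p_n1)/beta_n ...
              - abs(h'*p_n)^2 * beta_n1 / beta_n^2;

    max_violation = max(max_violation, surrogate - true_val);
end

% Tightness: at the expansion point the surrogate equals the true value
% (uses h, p_n and beta_n from the last trial).
gap_at_point = abs(h'*p_n)^2/beta_n ...
             - (2*real(p_n'*(h*h')*p_n)/beta_n - abs(h'*p_n)^2*beta_n/beta_n^2);

fprintf('largest surrogate - true value: %g\n', max_violation);
fprintf('gap at expansion point:         %g\n', gap_at_point);
```

If the first number is not positive (up to rounding) and the second is essentially zero, the surrogate itself behaves as the convergence argument requires, and the problem is more likely in the solve than in the approximation.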

This implementation works fine in CVX version 1.22 (downloaded from the CVX website) with SDPT3, for random parameters.

But in CVX version 2.2.2 (downloaded from GitHub), the implementation does not converge for my initial values (also taken from this paper) with either SDPT3 or SeDuMi. I have also tried varying the parameters (i.e. the initial values for P_n, beta_n or h, and stricter or more lenient requirements for R_req), which did not help. I was unable to find a single converging case.

I have also tried CVX version 2.2 (the standard bundle downloaded from the CVX website) with MOSEK, which did not converge either.
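Because the loop runs with `cvx_begin quiet` and never reads `cvx_status`, a subproblem reported as infeasible or failed would go unnoticed, and CVX would then leave NaN in `P_n1` and `beta_n1` for the next iteration. A minimal guard is sketched below, under the assumption that this is worth ruling out. `is_usable` is a hypothetical helper name, not part of CVX, and the status strings in the demo are stand-ins for the value of `cvx_status` after `cvx_end`.

```matlab
% Sketch: accept an SCA iterate only if CVX reports a usable solution.
% is_usable is a hypothetical helper, not a CVX function.
is_usable = @(status) any(strcmp(status, {'Solved', 'Inaccurate/Solved'}));

% Stand-in status strings; inside the loop this would be cvx_status itself.
statuses = {'Solved', 'Inaccurate/Solved', 'Infeasible', 'Failed'};
for s = 1:numel(statuses)
    if is_usable(statuses{s})
        fprintf('%-18s -> update P_n and beta_n\n', statuses{s});
    else
        fprintf('%-18s -> keep previous iterate and stop\n', statuses{s});
    end
end
```

In the loop itself, the check would go right after `cvx_end`, before `beta_n` and `P_n` are overwritten, so that a bad solve cannot contaminate the next linearisation point.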

Has anyone had a similar problem recently and found a solution? Or does anyone see an error in my model that would prevent it from converging?