You haven’t shown the complete code which triggered the error message.

b, which you don't show, is apparently log-affine; it needs to be affine. The second argument of rel_entr needs to be concave, but is apparently log-convex.

In light of these argument types which are not allowed for rel_entr, are you sure your optimization problem is convex?

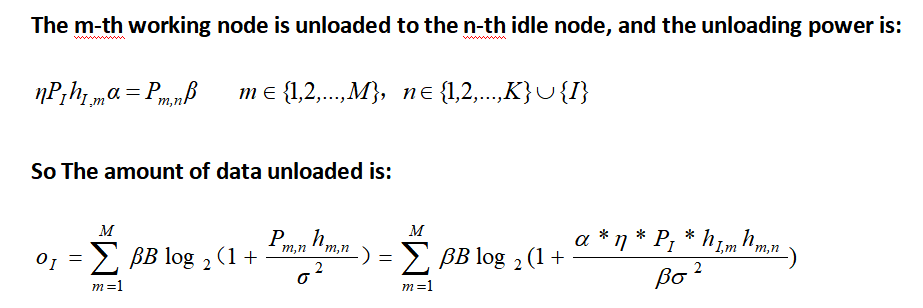

Presuming all the symbols in the amount of data unloaded are constants (input data), except for \beta and one of the symbols in the numerator, that expression can be formulated using

`x*log2(1+y/x) = -rel_entr(x,x+y)/log(2)`, with suitable choices of `x` and `y` to place the expression into this form.
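
For reference, the identity follows directly from the definition of rel_entr, assuming x > 0 and x + y > 0 (natural logarithm throughout):

$$
-\operatorname{rel\_entr}(x,\,x+y) \;=\; -x\log\frac{x}{x+y} \;=\; x\log\frac{x+y}{x} \;=\; x\log\!\left(1+\frac{y}{x}\right)
$$

Dividing by $\log 2$ converts the natural logarithm to base 2, which gives $x\log_2(1+y/x) = -\operatorname{rel\_entr}(x,\,x+y)/\log 2$.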

    help rel_entr

     rel_entr Scalar relative entropy.
        rel_entr(X,Y) returns an array of the same size as X+Y with the
        relative entropy function applied to each element:
                             { X.*LOG(X./Y) if X >  0 & Y >  0,
            rel_entr(X,Y) =  { 0            if X == 0 & Y >= 0,
                             { +Inf         otherwise.
        X and Y must either be the same size, or one must be a scalar. If X and
        Y are vectors, then SUM(rel_entr(X,Y)) returns their relative entropy.
        If they are PDFs (that is, if X>=0, Y>=0, SUM(X)==1, SUM(Y)==1) then
        this is equal to their Kullback-Liebler divergence SUM(KL_DIV(X,Y)).
        -SUM(rel_entr(X,1)) returns the entropy of X.

        Disciplined convex programming information:
            rel_entr(X,Y) is convex in both X and Y, nonmonotonic in X, and
            nonincreasing in Y. Thus when used in CVX expressions, X must be
            real and affine and Y must be concave. The use of rel_entr(X,Y) in
            an objective or constraint will effectively constrain both X and Y
            to be nonnegative, hence there is no need to add additional
            constraints X >= 0 or Y >= 0 to enforce this.
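
As a rough illustration of how these rules play out, here is a minimal CVX sketch that maximizes `x*log2(1+c*y/x)`. It is not your model: the constant `c`, the variables `x` and `y`, and the budget constraint `x + y <= 1` are all hypothetical placeholders, chosen only so that both arguments of rel_entr are affine (and therefore the second is also concave).

```matlab
% Hypothetical input data (not taken from the original post)
c = 2;                        % assumed positive constant scaling y

cvx_begin
    variables x y
    % x*log2(1 + c*y/x) written in DCP-compliant form:
    % first argument x is affine, second argument x + c*y is affine (hence concave)
    maximize( -rel_entr(x, x + c*y) / log(2) )
    subject to
        x + y <= 1;           % hypothetical resource budget
        y >= 0;
        % no need for x >= 0: rel_entr already enforces it
cvx_end
```

If either argument were instead built from an exponential or a product of variables, as the log-affine and log-convex expressions in your error message suggest, CVX would reject the call, which is why it is worth checking convexity of the original problem first.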